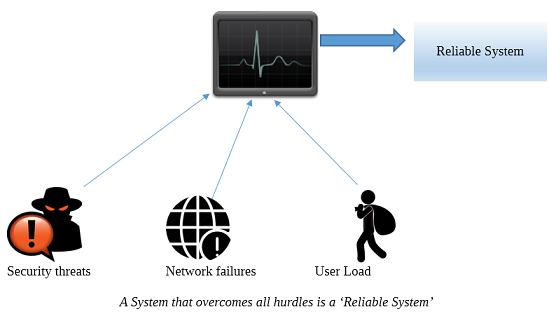

In software systems, reliability describes how consistently a system delivers its required functionality at the expected level of performance. Reliability analysis uses various techniques to predict whether a system is dependable and robust enough to withstand extreme conditions throughout its lifetime.

Statistical reliability measures the performance of a system through test scores and uses those scores to evaluate how reliable the product is. The test is run repeatedly to observe the output of the software system. If the results are the same on every run, the system is considered reliable; if they are not, the possible causes of the discrepancy should be identified and corrected.
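The repeated-run idea can be sketched in a few lines of Python. This is only an illustration, not a standard reliability metric: the function `repeatability`, the two sample systems, and the choice of "share of runs that agree with the most common output" as the score are all assumptions made for this example.

```python
from collections import Counter
from typing import Callable, Hashable


def repeatability(run_test: Callable[[], Hashable], runs: int = 20) -> float:
    """Return the share of runs that produced the most common output."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    outputs = Counter(run_test() for _ in range(runs))
    most_common_count = outputs.most_common(1)[0][1]
    return most_common_count / runs


def stable_system() -> int:
    # Hypothetical system under test: deterministic, always returns 45.
    return sum(range(10))


def make_flaky_system() -> Callable[[], int]:
    # Hypothetical defect: every fourth call returns a wrong value.
    calls = 0

    def flaky_system() -> int:
        nonlocal calls
        calls += 1
        return 45 if calls % 4 else -1

    return flaky_system


if __name__ == "__main__":
    print(repeatability(stable_system))        # 1.0
    print(repeatability(make_flaky_system()))  # 0.75
```

A score of 1.0 means every run agreed. Anything lower signals that some runs diverged and the cause should be investigated.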

Estimating reliability is a way to ascertain whether differences between results are due to errors in measurement or to genuine variability in test scores. In practice, there are four methods of estimating test reliability.

Internal consistency checks whether the different items within the same test give consistent results. It estimates how well those items work together within the same system and produce the same result.

Internal consistency can be estimated with several types of methods.
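One widely used internal consistency statistic is Cronbach's alpha. It is not named in the text above, so treat the following as an illustrative sketch only. It assumes each row holds the scores from one test run and each column is one item (for example, one check of the same quality attribute). The sample data is invented.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha; scores[i][j] is the score of row i on item j."""
    if len(scores) < 2 or len(scores[0]) < 2:
        raise ValueError("need at least two rows and two items")
    k = len(scores[0])
    # Sample variance of each item (column) across all rows.
    item_variances = [variance(column) for column in zip(*scores)]
    # Sample variance of the per-row total score.
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Invented scores: 4 runs, 3 items that should measure the same thing.
    sample = [
        [2.0, 3.0, 3.0],
        [4.0, 4.0, 5.0],
        [1.0, 2.0, 1.0],
        [5.0, 5.0, 4.0],
    ]
    print(round(cronbach_alpha(sample), 2))
```

Values close to 1 suggest the items move together. Values near 0 suggest they are not measuring the same thing.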

Different reliability models each have their own way of assessing the reliability of a software system, and each has its own advantages and disadvantages. Inter-rater reliability is often considered the best of these methods. Each method offers a quantifiable and efficient way to estimate the reliability, and therefore the performance, of a software system. The term "statistical" is used because a system's capability is judged on the basis of the scores each technique produces.
